How to read an Excel file in Python

Learn how to read Excel files in Python. This guide covers various methods, tips, real-world applications, and how to debug common errors.

Python makes it simple to read and process Excel files, a frequent task for data analysis and automation. Its powerful libraries streamline the entire workflow from start to finish.

Here, you'll learn several techniques to handle Excel data. You'll get practical tips, see real-world applications, and receive advice to debug common issues you might encounter.

Using pandas to read Excel files

import pandas as pd

df = pd.read_excel('data.xlsx')

print(df.head())

Output:

ID Name Age City

0 1 Alice 25 New York

1 2 Bob 30 Boston

2 3 Carol 22 Chicago

3 4 David 35 Seattle

4 5 Eve 28 Denver

The pandas library is the cornerstone of data analysis in Python. Its read_excel() function ingests an Excel file and converts its contents into a DataFrame. Think of a DataFrame as a powerful, in-memory version of your spreadsheet—a two-dimensional structure that organizes data into rows and columns for easy manipulation.

Calling df.head() is a quick way to inspect the first few rows. This step confirms that the data loaded correctly and matches your expectations before you proceed with any further analysis. It's a simple but crucial part of the data validation process.
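To try these snippets without a real data.xlsx, here's a minimal sketch that writes a small sample table to an in-memory buffer with DataFrame.to_excel() and reads it back. The sample rows are illustrative (they mirror the output above), and writing .xlsx assumes openpyxl or XlsxWriter is installed. Checking shape and dtypes alongside head() catches columns that loaded with the wrong type.

```python
import io

import pandas as pd

# Sample rows mirroring the output above (illustrative data, not a real file)
sample = pd.DataFrame({
    "ID": [1, 2, 3, 4, 5],
    "Name": ["Alice", "Bob", "Carol", "David", "Eve"],
    "Age": [25, 30, 22, 35, 28],
    "City": ["New York", "Boston", "Chicago", "Seattle", "Denver"],
})

# Write the workbook to an in-memory buffer instead of disk
buffer = io.BytesIO()
sample.to_excel(buffer, index=False)
buffer.seek(0)

# read_excel accepts file-like objects as well as paths
df = pd.read_excel(buffer)

# Quick validation: shape, column types, and the first rows
print(df.shape)
print(df.dtypes)
print(df.head())
```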

Basic Excel reading techniques

While the basic read_excel() function is powerful, you'll often need to target specific sheets, rows, and columns or use a more specialized tool like openpyxl.

Reading specific sheets with pd.read_excel()

import pandas as pd

# Read a specific sheet by name

df1 = pd.read_excel('data.xlsx', sheet_name='Sheet2')

# Read a specific sheet by index (0-based)

df2 = pd.read_excel('data.xlsx', sheet_name=1)

print(f"Sheet names: {pd.ExcelFile('data.xlsx').sheet_names}")

Output:

Sheet names: ['Sheet1', 'Sheet2', 'Sheet3']

Excel workbooks can have multiple sheets, and you'll often need to target a specific one. The read_excel() function's sheet_name parameter gives you precise control over what data you load. You can specify the sheet you want in two ways:

- By name: Pass the exact sheet name as a string, such as sheet_name='Sheet2'.

- By index: Use the sheet's numerical position. Since it's zero-based, sheet_name=1 will load the second sheet in the workbook.

If you don't know the sheet names, you can quickly get a list of them with pd.ExcelFile('data.xlsx').sheet_names.
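By default sheet_name=0, so only the first sheet loads. As a sketch with an illustrative two-sheet workbook built in memory, passing sheet_name=None reads every sheet into a dictionary keyed by sheet name, and a list requests just the sheets you name or number:

```python
import io

import pandas as pd

# Build a two-sheet workbook in memory (sheet names and data are illustrative)
buffer = io.BytesIO()
with pd.ExcelWriter(buffer) as writer:
    pd.DataFrame({"Region": ["North", "South"], "Sales": [100, 150]}).to_excel(
        writer, sheet_name="Q1", index=False
    )
    pd.DataFrame({"Region": ["North", "South"], "Sales": [120, 90]}).to_excel(
        writer, sheet_name="Q2", index=False
    )
buffer.seek(0)

# sheet_name=None loads every sheet into a dict keyed by sheet name
sheets = pd.read_excel(buffer, sheet_name=None)
for name, frame in sheets.items():
    print(name, frame["Sales"].sum())

# A list can mix names and positions; the result is still a dict
buffer.seek(0)
subset = pd.read_excel(buffer, sheet_name=["Q2", 0])
print(list(subset.keys()))
```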

Reading specific rows and columns

import pandas as pd

# Read specific rows

df = pd.read_excel('data.xlsx', skiprows=2, nrows=3)

# Read specific columns

df_cols = pd.read_excel('data.xlsx', usecols="A,C:E")

print(df_cols.head(2))

Output:

ID Age City

0 1 25 New York

1 2 30 Boston

Sometimes you don't need the entire dataset. The read_excel() function lets you selectively import data to save memory and simplify your DataFrame. You can control which rows and columns are loaded with specific parameters.

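- skiprows tells pandas to ignore a certain number of rows from the top of the file. The header row counts, so with skiprows=2 the third row of the sheet becomes the column names.

- nrows limits the import to a specific number of rows after that.

- usecols lets you pick columns by Excel letter or range, like "A,C:E", or by a list of column names, to load only the data you need.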
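Because the header counts as a skipped row, a list-like skiprows is often what you want when you need to skip data rows but keep the header. This sketch uses an illustrative ten-row table; the numbers in skiprows are 0-based file rows, with the header at row 0:

```python
import io

import pandas as pd

# Illustrative ten-row table (IDs 1-10) written to an in-memory workbook
sample = pd.DataFrame({
    "ID": range(1, 11),
    "Name": [f"user{i}" for i in range(1, 11)],
    "Score": [i * 10 for i in range(1, 11)],
})
buffer = io.BytesIO()
sample.to_excel(buffer, index=False)

# skiprows=2 drops the header row too, so the first remaining row becomes the header
buffer.seek(0)
shifted = pd.read_excel(buffer, skiprows=2, nrows=3)
print(list(shifted.columns))

# Skipping file rows 1-2 (0-based, header is row 0) keeps the real header
buffer.seek(0)
window = pd.read_excel(buffer, skiprows=range(1, 3), nrows=3)
print(window)

# usecols also accepts a list of column names
buffer.seek(0)
names_only = pd.read_excel(buffer, usecols=["ID", "Score"])
print(names_only.columns.tolist())
```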

Using openpyxl for Excel operations

from openpyxl import load_workbook

workbook = load_workbook(filename='data.xlsx')

sheet = workbook.active

value = sheet.cell(row=1, column=2).value

print(f"Cell B1 contains: {value}")

Output:

Cell B1 contains: Name

While pandas excels at data analysis, openpyxl offers more granular control over the workbook itself. It's the right tool when you need to work with specific cells, formulas, or formatting rather than bulk data. This approach treats the file less like a dataset and more like an actual spreadsheet. openpyxl is a separate pure-Python package (pip install openpyxl), and it's also the engine pandas uses to read .xlsx files.

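- You use load_workbook() to open the file and workbook.active to select the active sheet, the one that was selected when the workbook was last saved.

- The sheet.cell() method lets you pinpoint a cell by its row and column (both 1-based, so row=1, column=2 is cell B1), and .value extracts its content.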
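For reading many cells, looping with iter_rows() is usually more convenient than calling cell() repeatedly. The sketch below builds a small workbook with openpyxl in memory (the data is illustrative). For very large files, load_workbook() also accepts read_only=True to reduce memory use.

```python
import io

from openpyxl import Workbook, load_workbook

# Build a small workbook with openpyxl itself (sample data is illustrative)
wb = Workbook()
ws = wb.active
ws.append(["ID", "Name", "Age"])
ws.append([1, "Alice", 25])
ws.append([2, "Bob", 30])
buffer = io.BytesIO()
wb.save(buffer)
buffer.seek(0)

# load_workbook accepts a path or a file-like object
sheet = load_workbook(buffer).active

# Coordinate notation and cell() address the same cell; indices are 1-based
print(sheet["B1"].value, sheet.cell(row=1, column=2).value)

# iter_rows(values_only=True) yields plain tuples of cell values
for row in sheet.iter_rows(min_row=2, values_only=True):
    print(row)
```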

Advanced Excel reading methods

Moving beyond the basics, you can handle more intricate Excel files by using advanced options, parsing formulas, and even swapping out the underlying reading engine.

Using advanced pandas options

import pandas as pd

df = pd.read_excel(

'data.xlsx',

dtype={'ID': int, 'Age': int, 'Name': str},

converters={'City': lambda x: x.strip().upper()}

)

print(df[['Name', 'City']].head(2))

Output:

Name City

0 Alice NEW YORK

1 Bob BOSTON

The read_excel() function offers powerful options for cleaning data as you load it, saving you from extra steps later. You can enforce specific data types and apply custom transformations on the fly, ensuring your DataFrame is clean from the start.

- The dtype parameter lets you define the data type for each column, like ensuring ID and Age are integers (int). Plain int can't represent missing values, so a blank cell in either column makes the import raise an error.

- converters applies a function to every cell in a column. For example, you can use a lambda function to trim whitespace and convert city names to uppercase.
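Here's a sketch of both options on illustrative messy data. It assumes you want missing ages kept as missing: the converter guards against non-string cells, and the Age column is cast to pandas' nullable Int64 type after loading, since plain int can't hold a blank.

```python
import io

import pandas as pd

# Messy sample data (illustrative): padded city names and one missing age
raw = pd.DataFrame({
    "ID": [1, 2, 3],
    "Age": [25, None, 30],
    "City": ["  new york ", "boston", " chicago"],
})
buffer = io.BytesIO()
raw.to_excel(buffer, index=False)
buffer.seek(0)


def clean_city(value):
    # Converters see raw cell values; guard against non-strings such as NaN
    return value.strip().upper() if isinstance(value, str) else value


df = pd.read_excel(buffer, dtype={"ID": int}, converters={"City": clean_city})

# dtype={'Age': int} would fail on the empty cell; nullable Int64 keeps it as <NA>
df["Age"] = df["Age"].astype("Int64")
print(df)
print(df.dtypes)
```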

Working with Excel formulas

import pandas as pd

from openpyxl import load_workbook

wb = load_workbook('data.xlsx', data_only=False)

ws = wb.active

formula = ws['F1'].value

# header=None keeps Excel row 1 as data, so iloc[0, 5] is cell F1 (not F2)

result = pd.read_excel('data.xlsx', header=None).iloc[0, 5]

print(f"Formula: {formula}, Result: {result}")

Output:

Formula: =SUM(C1:E1), Result: 55

When your Excel file contains formulas, you might need either the formula's text or its calculated result. Python handles both, but you'll use different tools for each task.

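- To get the actual formula string, like =SUM(C1:E1), you use openpyxl. With data_only=False, which is the default for load_workbook(), you read the formula instead of its output.

- To get the computed value, you can stick with pandas. Its read_excel() function returns the result Excel cached when the file was last saved; neither pandas nor openpyxl recalculates formulas. If the file was generated by a script and never opened and saved in Excel, there is no cached result, and the cell comes back empty.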

Reading Excel files with multiple engines

import pandas as pd

# Using xlrd engine (legacy Excel files .xls)

df_xls = pd.read_excel('legacy.xls', engine='xlrd')

# Using openpyxl engine (default for .xlsx)

df_xlsx = pd.read_excel('data.xlsx', engine='openpyxl')

print("Excel files loaded successfully with appropriate engines")

Output:

Excel files loaded successfully with appropriate engines

Under the hood, pandas relies on different libraries, or "engines," to parse Excel files. While it's smart enough to usually pick the right one based on the file extension, you can manually specify which to use with the engine parameter. This gives you direct control over how files are read.

- openpyxl: The default engine for modern .xlsx files.

- xlrd: Required for older, legacy .xls files. Since version 2.0, xlrd reads only .xls, so it can no longer open .xlsx files.

Explicitly setting the engine is key for compatibility and can resolve issues when pandas can't infer the file type correctly.
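When you need several sheets from one workbook, pd.ExcelFile accepts the same engine argument and lets you open the file once, then parse each sheet from that handle. A sketch with an illustrative two-sheet workbook (a legacy .xls file would use engine='xlrd' instead, which this example doesn't cover):

```python
import io

import pandas as pd

# Two-sheet sample workbook in memory (illustrative data)
buffer = io.BytesIO()
with pd.ExcelWriter(buffer, engine="openpyxl") as writer:
    pd.DataFrame({"Item": ["pen"], "Qty": [3]}).to_excel(writer, sheet_name="Orders", index=False)
    pd.DataFrame({"Item": ["pen"], "Price": [1.5]}).to_excel(writer, sheet_name="Prices", index=False)
buffer.seek(0)

# Open the workbook once with an explicit engine, then parse sheets from that handle
with pd.ExcelFile(buffer, engine="openpyxl") as xls:
    sheet_names = xls.sheet_names
    orders = xls.parse("Orders")
    prices = pd.read_excel(xls, sheet_name="Prices")

print(sheet_names)
print(orders.merge(prices, on="Item"))
```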

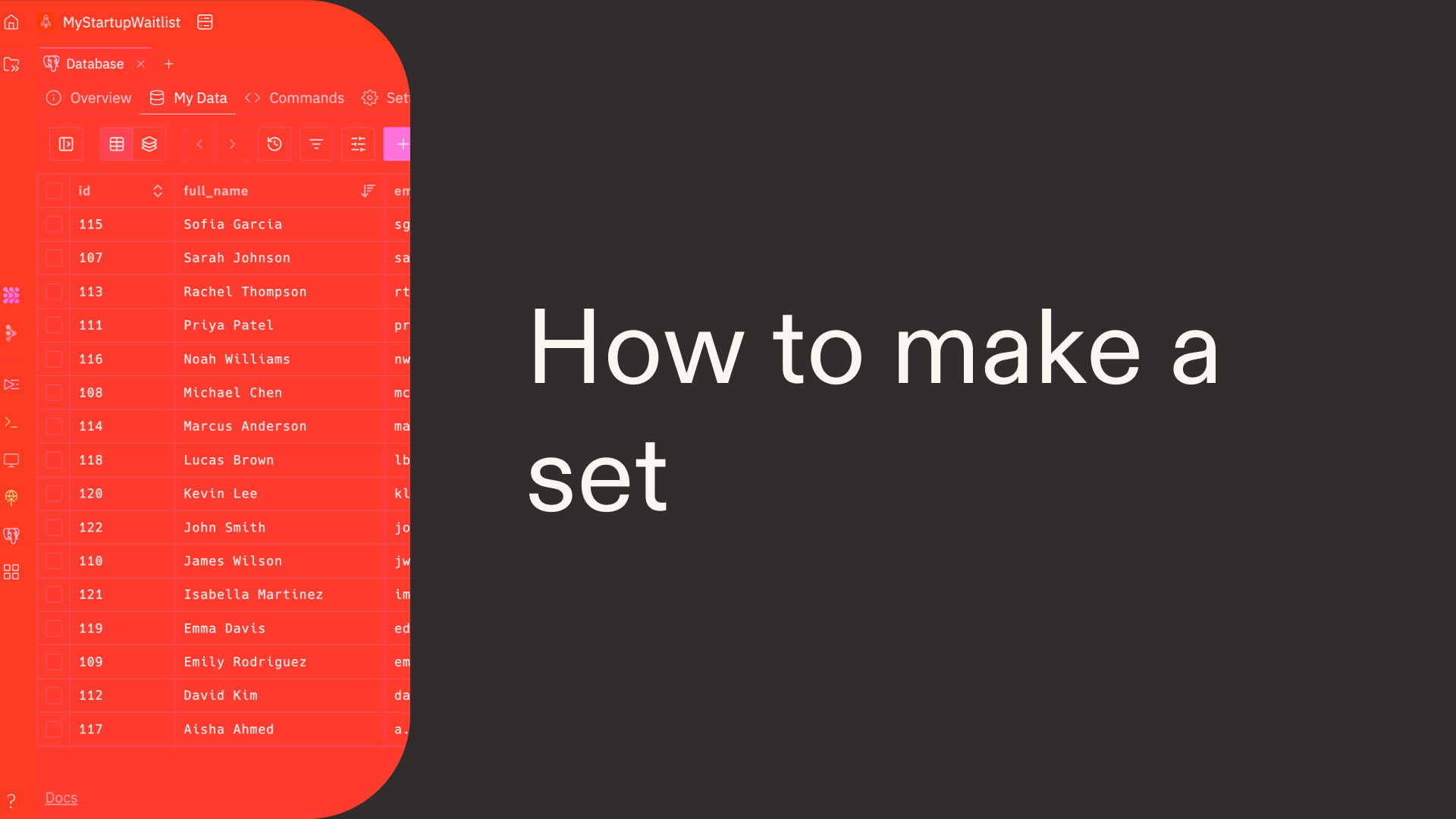

Move faster with Replit

Replit is an AI-powered development platform that comes with all Python dependencies pre-installed, so you can skip setup and start coding instantly. This lets you move from piecing together techniques to building complete applications faster.

Describe what you want to build, and Agent 4 handles everything—from writing the code to connecting databases and APIs, to deploying it live. It can take your idea for an Excel-based tool and turn it into a working product:

- A data dashboard that reads a multi-sheet Excel file to extract and visualize key performance indicators.

- An automated reporting tool that ingests raw sales data, cleans it using custom rules, and exports a formatted summary.

- A contact list importer that pulls specific columns like names and emails from a spreadsheet for database entry.

Simply describe your app, and Replit will write the code, test it, and fix issues automatically, all within your browser.

Common errors and challenges

You'll inevitably encounter data glitches when reading Excel files, but pandas provides simple solutions for the most common problems.

- Fixing numeric data read as strings: Sometimes a column of numbers gets imported as text. This usually happens when the numbers are stored as text in Excel or the column contains non-numeric characters. For clean values, you can use the dtype parameter in read_excel() to specify the intended data type, such as dtype={'ID': int}; values with stray characters need cleaning first.

- Dealing with NaN values: Empty cells in your spreadsheet become NaN (Not a Number) values in a DataFrame, the standard way pandas marks missing data. You can use fillna() to replace them with a specific value or dropna() to remove the rows containing them altogether.

- Resolving date parsing issues: Dates can be tricky and often load as text instead of proper datetime objects, which makes them useless for time-based analysis. The parse_dates parameter is your solution. Passing it a list of column names, for example parse_dates=['JoinDate'], tells pandas to convert those columns into datetime values.

Fixing numeric data read as strings with dtype

A common snag is when a column of numbers gets read as text, often due to a stray character or inconsistent formatting. This prevents mathematical operations, since you can't sum text. The following code shows what happens when you try to use .sum() on a misread column.

import pandas as pd

# Numbers might be read as strings

df = pd.read_excel('financial_data.xlsx')

print(df['Revenue'].dtype)

# This doesn't add numbers: it concatenates strings, or raises TypeError if text and numbers are mixed

result = df['Revenue'].sum()

print(f"Total revenue: {result}")

The problem is that sum() can't do arithmetic on text. If every value in the 'Revenue' column is a string, pandas concatenates them into one long string instead of adding them; if text and numbers are mixed, it raises a TypeError. See how to fix this below.

import pandas as pd

# Explicitly set data types

df = pd.read_excel('financial_data.xlsx', dtype={'Revenue': float})

print(df['Revenue'].dtype)

result = df['Revenue'].sum()

print(f"Total revenue: {result}")

The fix is to use the dtype parameter in read_excel(). By passing dtype={'Revenue': float}, you tell pandas to treat the 'Revenue' column as numbers from the start, so mathematical operations like .sum() work as expected. This works when the cells hold numbers stored as text, such as '1200'. If the column contains stray characters like currency symbols or thousands separators, the conversion raises a ValueError, and you'll need to clean the values first. Either way, checking the column's dtype is a good first step when your math functions fail.
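When the column holds stray characters such as currency symbols or thousands separators, dtype=float isn't enough. One common approach, sketched here on illustrative values, is to strip those characters and convert with pd.to_numeric(errors='coerce'), which turns anything still unparseable into NaN. The regex assumes dollar signs and commas are the only noise; adjust it for your data.

```python
import pandas as pd

# Illustrative Revenue values as they might arrive from a messy sheet
revenue = pd.Series(["1,200", "$950", "800", "n/a"])

# dtype=float (or .astype(float)) would raise ValueError on strings like "1,200",
# so remove the dollar signs and thousands separators first
cleaned = revenue.str.replace(r"[$,]", "", regex=True)

# errors="coerce" turns anything still unparseable into NaN instead of raising
numbers = pd.to_numeric(cleaned, errors="coerce")
print(numbers.tolist())
print(numbers.sum())  # NaN is skipped by default
```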

Dealing with NaN values in Excel imports

Missing data is a common hurdle in analysis. Pandas represents empty Excel cells as NaN (Not a Number) values, and most calculations simply skip them. The real trouble starts when a numeric column also contains placeholder text, which turns the whole column into text. The code below demonstrates what happens when you try to calculate an average with mean() on such a column.

import pandas as pd

# Empty cells become NaN by default

df = pd.read_excel('customer_data.xlsx')

# This raises error if Age column contains non-numeric values

average_age = df['Age'].mean()

print(f"Average age: {average_age}")

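The mean() call doesn't fail because of NaN itself, since pandas skips missing values by default. It fails when placeholder text such as '-' or 'unknown' sits among the numbers, because pandas then reads the whole column as text (object dtype). The code below shows how to manage these values before performing calculations.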

import pandas as pd

# Handle missing values during import

df = pd.read_excel('customer_data.xlsx', na_values=['N/A', ''], keep_default_na=True)

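# mean() skips NaN by default; fillna(0) would count missing ages as 0 and drag the average down

average_age = df['Age'].mean()

print(f"Average age: {average_age}")

The fix is to handle missing values during import and let pandas skip them in calculations. The na_values parameter in read_excel() lets you list extra strings that should count as missing data. 'N/A' and empty cells are already on pandas' default list, so in practice you'd add placeholders such as '-' or 'unknown'; keep_default_na=True keeps those defaults active. Once the placeholders become NaN, the column is numeric and mean() ignores the missing entries. Use fillna() only when a replacement value makes sense for your analysis, or dropna() to remove incomplete rows. This is a common step when your source data isn't perfectly clean.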
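To see why fillna(0) is rarely the right choice for an average, here's a sketch with illustrative data containing one '-' placeholder and one empty cell. mean() skips missing values on its own, while filling them with zero counts them as real ages:

```python
import io

import pandas as pd

# Illustrative customer data: one "-" placeholder and one empty cell in Age
raw = pd.DataFrame({
    "Name": ["Ann", "Ben", "Cy", "Di"],
    "Age": [25, "-", 35, None],
})
buffer = io.BytesIO()
raw.to_excel(buffer, index=False)
buffer.seek(0)

# Add "-" to the missing-value markers; empty cells are NaN by default
df = pd.read_excel(buffer, na_values=["-"])

print(df["Age"].mean())                # NaN values are skipped
print(df["Age"].fillna(0).mean())      # zeros drag the average down
print(len(df.dropna(subset=["Age"])))  # rows with a known age
```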

Resolving date parsing issues with parse_dates

Dates can cause headaches when they're read as text instead of proper date objects, which stops you from performing time-based calculations. The parse_dates parameter is the solution, but first, see what happens when you try to work with misread date data.

import pandas as pd

# Excel dates might be read incorrectly

df = pd.read_excel('dates.xlsx')

print(df['Date'].dtype)

# Attempting date operations can fail

next_day = df.loc[0, 'Date'] + pd.Timedelta(days=1)

This operation fails with a TypeError because the value in the 'Date' column is plain text, and Python can't add a pd.Timedelta to a string. The data type is wrong for date arithmetic. The code below shows how to correct this as you load the file.

import pandas as pd

# Explicitly parse date columns

df = pd.read_excel('dates.xlsx', parse_dates=['Date'])

print(df['Date'].dtype)

# Now date operations work correctly

next_day = df.loc[0, 'Date'] + pd.Timedelta(days=1)

The solution is to use the parse_dates parameter. Passing parse_dates=['Date'] to read_excel() tells pandas to interpret the 'Date' column as a datetime object from the start. This corrects the data type, so you can perform time-based calculations like adding a pd.Timedelta without any issues. You'll need this fix whenever date columns are read as text, which often happens with inconsistent formatting in the source file.
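parse_dates relies on pandas recognizing the date layout. When text dates follow a specific layout such as day/month/year, or some cells hold junk, a more predictable option is to load the column as-is and convert it with pd.to_datetime() and an explicit format. A sketch on illustrative values:

```python
import pandas as pd

# Illustrative text dates in day/month/year order, plus one bad entry
df = pd.DataFrame({"Date": ["31/01/2024", "15/02/2024", "not a date"]})

# An explicit format removes day/month ambiguity; errors="coerce" turns failures into NaT
df["Date"] = pd.to_datetime(df["Date"], format="%d/%m/%Y", errors="coerce")
print(df["Date"].dtype)

# Date arithmetic now works; NaT propagates for the bad row
df["NextDay"] = df["Date"] + pd.Timedelta(days=1)
print(df)
```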

Real-world applications

Now that you can clean and troubleshoot your data, you can confidently tackle complex, real-world analysis tasks.

Consolidating monthly reports from multiple Excel files

You'll often need to combine data from multiple Excel files, like monthly reports, into a single dataset for a complete analysis.

import pandas as pd

import glob

import os

# Get all Excel files (sorted for a stable order) and combine them

excel_files = sorted(glob.glob('monthly_reports/*.xlsx'))

all_data = [pd.read_excel(file).assign(Source=os.path.basename(file)) for file in excel_files]

combined_df = pd.concat(all_data, ignore_index=True)

print(f"Combined data shape: {combined_df.shape}")

print(combined_df[['Source', 'Revenue']].groupby('Source').sum())

This approach combines data scattered across multiple Excel files. It first uses glob.glob() to gather the paths of all .xlsx files in a directory. Then, in a single line, it processes each file:

- It reads the Excel data into a DataFrame using pd.read_excel().

- It adds a new 'Source' column with .assign() to keep track of which file the data came from.

- Finally, pd.concat() merges all the separate DataFrames into one master table, ready for analysis like the final .groupby() summary.
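For a reusable version, here's a sketch of a hypothetical combine_reports() helper built on pathlib. Path.name gives the file name on any operating system, and the helper raises a clear error when the folder has no .xlsx files, since pd.concat() fails on an empty list. The demo writes two illustrative monthly files to a temporary folder:

```python
import tempfile
from pathlib import Path

import pandas as pd


def combine_reports(folder):
    """Stack every .xlsx file in folder into one DataFrame with a Source column."""
    files = sorted(Path(folder).glob("*.xlsx"))
    if not files:
        # pd.concat raises ValueError on an empty list, so fail with a clearer message
        raise FileNotFoundError(f"No .xlsx files found in {folder}")
    frames = [pd.read_excel(path).assign(Source=path.name) for path in files]
    return pd.concat(frames, ignore_index=True)


# Demo with two hypothetical monthly files in a temporary folder
with tempfile.TemporaryDirectory() as tmp:
    pd.DataFrame({"Revenue": [100, 200]}).to_excel(Path(tmp) / "2024-01.xlsx", index=False)
    pd.DataFrame({"Revenue": [50]}).to_excel(Path(tmp) / "2024-02.xlsx", index=False)
    combined = combine_reports(tmp)

print(combined.groupby("Source")["Revenue"].sum())
```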

Analyzing financial data with groupby and agg

You can analyze financial performance across different business units by using groupby() to segment the data and agg() to calculate summary statistics for each group.

import pandas as pd

# Read financial data and calculate profit metrics by department

financial_data = pd.read_excel('financial_records.xlsx')

dept_summary = financial_data.groupby('Department').agg({

'Revenue': 'sum', 'Expenses': 'sum'

})

dept_summary['Profit_Margin'] = (dept_summary['Revenue'] - dept_summary['Expenses']) / dept_summary['Revenue'] * 100

print(dept_summary.sort_values('Profit_Margin', ascending=False).head(3))

This code transforms raw financial records into a concise summary. It segments the data by department before calculating key totals for each one.

- The groupby('Department') method organizes the data into distinct groups.

- agg() then applies functions to these groups, summing up Revenue and Expenses.

A new Profit_Margin column is calculated from these totals. The final step sorts the departments to reveal the top three performers, giving you a quick look at which business units are most successful.
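pandas also supports named aggregation, where each keyword passed to agg() becomes an output column built from a (column, function) pair. That lets you compute several statistics from the same column, such as a deal count next to total revenue. A sketch with illustrative records standing in for financial_records.xlsx:

```python
import pandas as pd

# Illustrative records standing in for financial_records.xlsx
records = pd.DataFrame({
    "Department": ["Sales", "Sales", "R&D", "Support"],
    "Revenue": [500, 300, 400, 200],
    "Expenses": [300, 100, 380, 150],
})

# Named aggregation: each keyword becomes an output column from (column, function)
summary = records.groupby("Department").agg(
    Revenue=("Revenue", "sum"),
    Expenses=("Expenses", "sum"),
    Deals=("Revenue", "count"),
)
summary["Profit_Margin"] = (summary["Revenue"] - summary["Expenses"]) / summary["Revenue"] * 100
print(summary.sort_values("Profit_Margin", ascending=False))
```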

Get started with Replit

Turn these techniques into a real application. Tell Replit Agent: “Build a tool to merge monthly sales reports from Excel files” or “Create a dashboard that calculates profit margins from a financial spreadsheet.”

Replit Agent writes the code, tests for errors, and deploys your app. Start building with Replit.

Describe what you want to build, and Replit Agent writes the code, handles the infrastructure, and ships it live. Go from idea to real product, all in your browser.
